AI is coming for radiology. Are women safe? 12 signs hospitals are already replacing human specialists

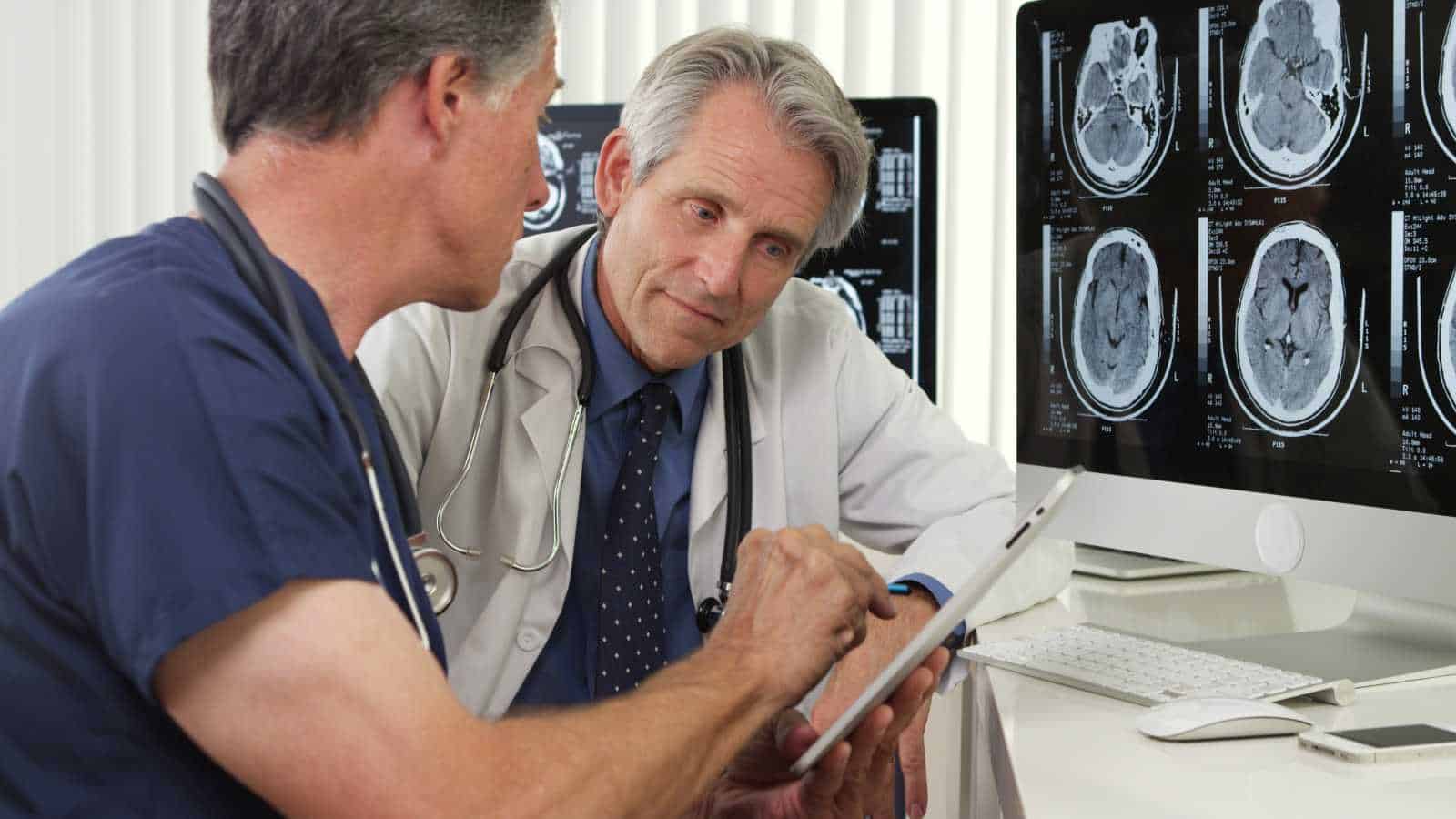

Hospitals are entering a phase in which artificial intelligence is no longer an experimental tool but an operational necessity. Across healthcare systems, imaging demand has expanded multiple times over the past two decades, creating structural pressure that traditional radiology workflows struggle to absorb.

At the same time, AI systems are now demonstrating performance levels in imaging tasks that approach expert human interpretation in controlled clinical studies, making them viable for integration into routine diagnostic pipelines.

This technological shift is unfolding within a workforce that is not evenly distributed across its development layers. Women remain underrepresented in artificial intelligence research and engineering roles, accounting for roughly a quarter or less of the technical workforce, depending on the region and definition used. This imbalance does not determine outcomes on its own, but it shapes the environments in which clinical tools are designed, trained, and optimized.

The result is not a sudden replacement of medical specialists, but a gradual reconfiguration of how diagnostic work is organized, delegated, and evaluated inside modern healthcare systems.

The Separation of Image Capture and Interpretation Is Accelerating

For decades, radiology operated as an integrated practice in which imaging and interpretation were closely linked within the hospital. That structure is now shifting. Hospitals are increasingly separating image capture from analysis, enabled by AI systems that route scans across networks for rapid review. Radiologic technologists remain responsible for patient-facing tasks and image acquisition, while interpretation is distributed across digital platforms where algorithms often perform initial reads before a radiologist intervenes.

This unbundling is reinforced by economic incentives. A report by the Harvey L. Neiman Health Policy Institute highlights rising imaging volumes and payment structures that reward throughput. AI-assisted pre-reading enables routine cases, such as standard chest X-rays, to be processed at scale, reducing the need for direct physician involvement in the early stages. The result is a more industrial model of radiology, where efficiency and speed take precedence over integrated consultation.

This shift also alters professional dynamics. Subspecialties that rely on patient interaction and contextual judgment, including breast and pediatric imaging, risk being de-emphasized as interpretation becomes standardized and remote.

As Curtis Langlotz has observed, the competitive advantage is shifting toward those who can effectively work alongside AI systems. In practice, this often reframes the radiologist’s role from that of a primary decision-maker to that of a verifier of algorithmic outputs. Over time, this redistribution of tasks may compress traditional career pathways and reshape where influence and specialization sit within the field.

AI Is Standardizing What “A Good Image” Looks Like

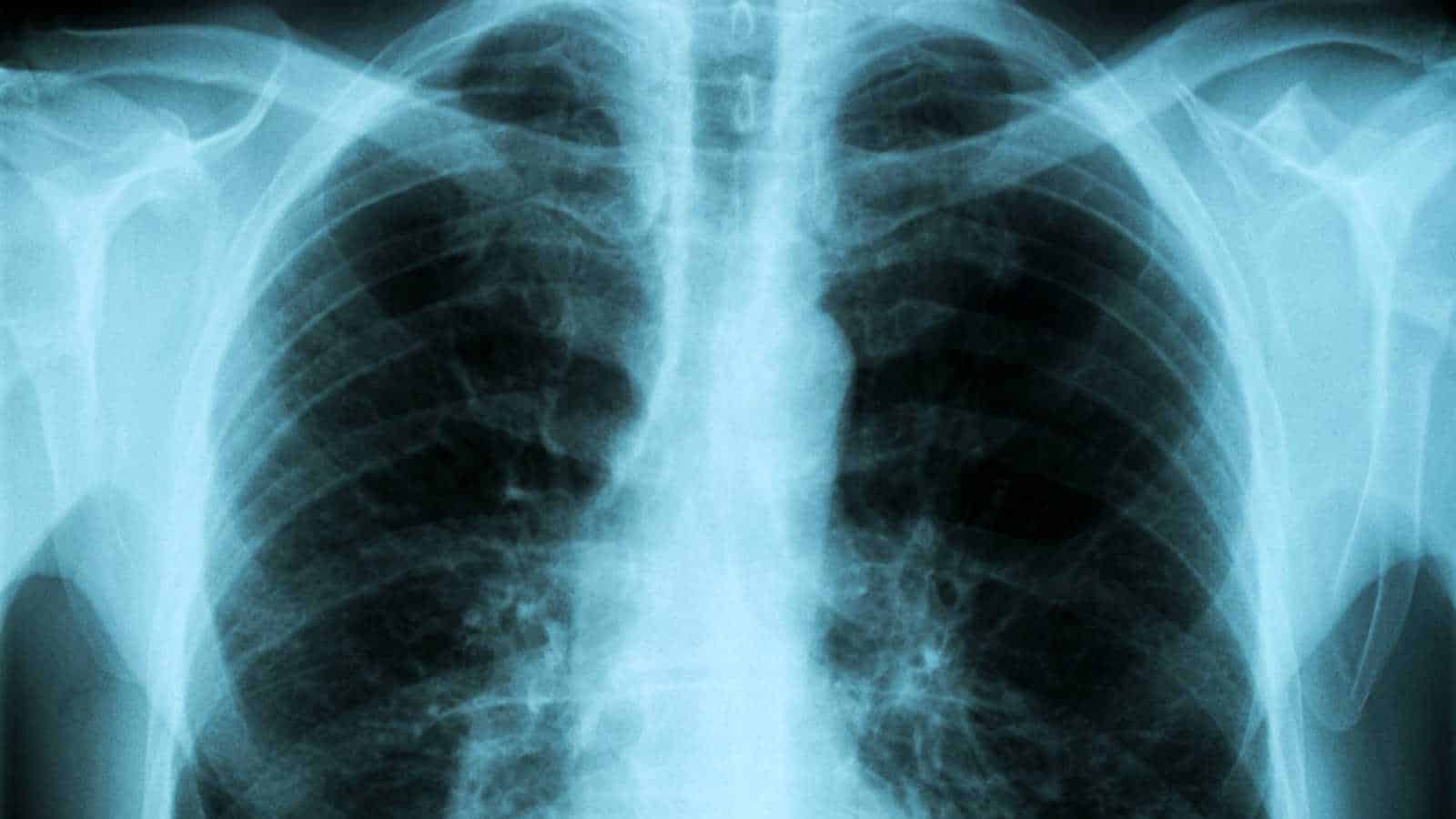

The subjective eye of the senior radiologist is being traded for the neural network’s objective threshold. AI systems are now the primary gatekeepers of image quality, flagging scans that don’t meet strict mathematical parameters for clarity and contrast.

While this reduces variability, it introduces a dangerous biological bias. Most foundational datasets used to train these systems, such as the NIH ChestX-ray14 dataset, have historically lacked granular demographic labels, leading to a “male-by-default” baseline.

If the algorithm’s definition of a clear image is optimized for a 180lb male torso, the natural variations in female breast tissue or smaller bone density can be interpreted as noise or sub-optimal artifacts.

A study published in The Lancet Digital Health revealed that AI models can accurately predict a patient’s self-reported race and biological sex from X-rays that appear identical to human eyes, yet these models often perform worse on female-specific pathologies when those features deviate from the standard training norm.

For women, this means a higher likelihood of a scan being rejected by an automated preprocessor or, worse, of a subtle but vital detail being smoothed over by an AI trying to make the image look like a textbook male example.

Hospitals Are Quietly Reducing Time Per Scan

Efficiency is the new North Star of the modern imaging department. With AI tools now capable of automating mundane tasks such as bone age assessment and lung nodule detection, hospital administrators are tightening the allocated time per read.

The logic is simple: if the machine does the prep, the human should finish faster. This pressure to be productive is often measured in Relative Value Units (RVUs), a metric that has become the bane of the empathetic clinician. As The Medical Futurist, Dr. Bertalan Meskó, observes, the rush to maximize scans per hour leaves zero room for the nuanced interpretation that complex cases require.

This fast-food approach to diagnostics disproportionately penalizes female patients. Medical literature, including research from the American Heart Association, consistently shows that women present with atypical symptoms for conditions like myocardial infarction or certain pulmonary issues. When an AI-driven workflow forces a radiologist to scan for patterns at a breakneck pace, the subtle clues of a female-specific presentation are the first things to be missed.

The machine is looking for the 90% probability; it isn’t designed to linger on the 10% of cases that don’t fit the mold. This speed-trap effectively turns the diagnostic process into a search for the most likely rather than the most accurate, a shift that erodes the safety net for anyone who isn’t a statistical average.

Junior Radiology Roles Are Being Compressed

The traditional apprenticeship of the radiology resident, spending hours on normal scans to build an instinct for the abnormal, is being short-circuited. AI is now taking over the first reads and routine cases (such as simple fractures or clear lungs), which were historically the training ground for junior doctors. This creates a massive bottleneck at the early-career stage.

According to data from the Association of American Medical Colleges, women have been entering radiology residencies at increasing rates, yet they are entering a field where the ladder is losing its bottom rungs.

Without the volume of routine work to hone their skills, junior specialists are forced to jump straight into complex, high-stakes cases where they must compete with or oversee sophisticated AI. This compression of the role removes the buffer zone where confidence is built.

Some argue that this might actually democratize the field by removing grunt work. The reality is starker: when you remove the routine, you remove the entry point. For a demographic that already faces systemic hurdles in reaching senior leadership, the disappearance of these foundational roles isn’t just a change in workflow; it’s a removal of the path upward.

Diagnostic Confidence Is Being Quantified by Algorithms

We are moving into an era where a doctor’s gut feeling is replaced by an AI-generated Confidence Score. When a scan is processed, the software outputs a probability: “88% likelihood of malignancy”. This number carries immense weight. Clinicians are increasingly conditioned to rely on these outputs when making escalation decisions.

If the score is high, they biopsy; if it’s low, they monitor. But what if the score is inherently flawed? Research from MIT’s Computer Science and Artificial Intelligence Laboratory has shown that AI confidence can be unreliable, particularly for subgroups underrepresented during training.

If an algorithm underfits female anatomy, meaning it hasn’t learned the specific patterns of female disease as well as those of males, the confidence scores for women will be lower or more erratic. A radiologist seeing a “low confidence” score on a female patient’s scan might be less likely to advocate for a second opinion or an expensive MRI, assuming the machine knows there’s nothing there.

The algorithm’s uncertainty is mistaken for the patient’s health, and because the machine’s authority is rarely questioned in a high-volume environment, the human specialist becomes a passive observer of a statistical bias they cannot see.
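One way to make that hidden bias visible is to compare, per patient group, the model's average score with how often disease was actually confirmed. The sketch below is illustrative only: the function name `calibration_gap_by_group`, the group labels "A" and "B", and every number are assumptions made up for this example, not results from any study or product.

```python
from collections import defaultdict


def calibration_gap_by_group(records):
    """Return {group: mean model score - observed rate of confirmed disease}.

    A gap near zero means the scores match reality for that group.
    A clearly negative gap means the model is underconfident there.
    """
    # Per group: [sum of scores, number of confirmed positives, case count]
    totals = defaultdict(lambda: [0.0, 0, 0])
    for group, score, confirmed in records:
        entry = totals[group]
        entry[0] += score
        entry[1] += confirmed
        entry[2] += 1
    return {g: s / n - pos / n for g, (s, pos, n) in totals.items()}


# Hypothetical (group, model score, confirmed outcome) triples, not real data.
records = [
    ("A", 0.9, 1), ("A", 0.8, 1), ("A", 0.2, 0), ("A", 0.1, 0),
    ("B", 0.4, 1), ("B", 0.3, 1), ("B", 0.2, 0), ("B", 0.1, 0),
]

gaps = calibration_gap_by_group(records)
for group, gap in sorted(gaps.items()):
    print(f"group {group}: calibration gap {gap:+.2f}")
```

In this made-up data, half the cases in each group have confirmed disease, but group B's true positives receive low scores, so its gap is clearly negative while group A's is close to zero. A reader who only ever sees the individual score has no way to notice that difference; it only appears when outcomes are audited by group.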

Triage Systems Decide Who Gets Seen First

In a crowded ER, the AI is the silent dispatcher. Triage algorithms scan the worklist and move life-threatening cases to the top. While this sounds like a victory for patient safety, the logic of urgency is often predicated on male-centric data.

For example, an AI prioritizing acute findings may be trained to recognize specific calcification patterns or vascular signatures that are common in men. If a woman’s emergency presents with radiologic markers that differ from those typically seen in male patients, such as subtle ground-glass opacities sometimes seen in female-specific inflammatory responses, the AI may relegate her scan to the bottom of the pile.

Dr. Ruha Benjamin, author of Race After Technology, highlights how automated systems often encode inequity. In radiology, this manifests as a mis-ranking of urgency. A woman with a life-threatening but atypical presentation might sit in a digital queue for four hours because the AI didn’t recognize the classic signs it was trained to prioritize.
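The mechanics of that mis-ranking are simple to sketch. The example below assumes a worklist ordered purely by a model's urgency score; the function `read_order`, the scan identifiers, and the scores are all hypothetical, and real triage products may combine many more signals.

```python
import heapq


def read_order(worklist):
    """Yield scan ids from highest to lowest model urgency score."""
    # heapq is a min-heap, so the score is negated; the arrival index
    # breaks ties in favor of the scan that arrived first.
    heap = [(-score, i, scan_id) for i, (scan_id, score) in enumerate(worklist)]
    heapq.heapify(heap)
    while heap:
        yield heapq.heappop(heap)[2]


# Hypothetical worklist in arrival order: (scan id, model urgency score).
# Suppose "scan-3" is truly urgent but atypical, so the model scores it low.
worklist = [("scan-1", 0.35), ("scan-2", 0.92), ("scan-3", 0.15), ("scan-4", 0.60)]

order = list(read_order(worklist))
print(order)
```

Under these assumptions the queue is only as good as the score: the atypical case is read last, however urgent it really is, unless a human or a fallback rule (for example, a maximum wait time) overrides the ranking.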

Numbers from the Journal of the American Heart Association indicate that women already face longer wait times for diagnoses in cardiovascular and neurological emergencies; by delegating the order of operations to an algorithm, hospitals risk cementing these delays into the very infrastructure of the building.

Liability Is Staying Human While Authority Is Shifting to AI

This is the great paradox of the modern hospital: the AI makes the suggestion, but the human signs the legal document. The authority to influence a diagnosis is shifting to algorithms, while liability remains firmly with the radiologist. Physicians are therefore hesitant to disagree with a machine’s output for fear that, should they be wrong, they will be held solely accountable for ignoring a data-driven insight.

In this high-pressure environment, the ways clinicians trust AI can vary. Some studies suggest that in high-stress, male-dominated professional environments, there is a tendency to defer to technical or hard data (the AI) over soft clinical intuition.

For women in the field, who may already feel that their clinical judgment is more closely scrutinized than that of their male counterparts, the pressure to conform to the AI’s opinion is immense. They are being turned into overseers of a system they didn’t build, responsible for mistakes they didn’t make, and stripped of the autonomy that once defined the profession.

Global Teleradiology Is Expanding Faster Than Local Hiring

The Digital Nomad has arrived in radiology. AI enables seamless transmission and pre-processing of images across borders, allowing a hospital in Chicago to have its scans read by a teleradiology firm in a different time zone.

By outsourcing interpretation, hospitals can bypass the costs of local hiring, benefits, and workplace protections. While some argue this democratizes access to specialists, the American College of Radiology has raised concerns about the commoditization of the specialty.

For women in the workforce, this shift is a double-edged sword. On one hand, teleradiology offers flexibility: the ability to work from home and manage childcare. On the other hand, it intensifies a race to the bottom in terms of pay. When your competitor is an AI-augmented reader in a lower-cost-of-living region, your bargaining power evaporates.

The opportunity structures are shifting toward a gig-economy model that favors those who can process the most volume at the lowest cost, a framework that has historically eroded the professional standing of women who may prioritize clinical depth and patient-facing time over raw digital output.

Normal Is Becoming a Statistical Construct

In AI, normal is not a biological reality; it is a statistical cluster. An algorithm defines a healthy lung by averaging millions of data points. If those data points are skewed, for example if 70% of them come from male patients, then the cluster representing normal moves away from the female average.
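The arithmetic behind that drift can be shown with a weighted average. This is a deliberately simplified sketch: real models learn far more than a single mean, and the measurement values and the helper `pooled_mean` below are hypothetical, chosen only to make the effect easy to see.

```python
def pooled_mean(group_means, group_shares):
    """Average of group means, weighted by each group's share of the data."""
    assert abs(sum(group_shares.values()) - 1.0) < 1e-9, "shares must sum to 1"
    return sum(group_means[g] * group_shares[g] for g in group_means)


# Hypothetical average of some imaging measurement per group (arbitrary units).
means = {"male": 10.0, "female": 8.0}

balanced = pooled_mean(means, {"male": 0.5, "female": 0.5})
skewed = pooled_mean(means, {"male": 0.7, "female": 0.3})

# How far the learned "normal" sits from the female average in each case.
female_offset_balanced = balanced - means["female"]
female_offset_skewed = skewed - means["female"]

print(f"balanced normal: {balanced:.1f}, skewed normal: {skewed:.1f}")
print(f"distance from female average: {female_offset_balanced:.1f} "
      f"vs {female_offset_skewed:.1f}")
```

With these illustrative numbers, a balanced dataset puts normal at about 9.0, equally far from both groups, while a 70/30 split moves it to about 9.4, which is closer to the male average and farther from the female one.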

A study in Nature Machine Intelligence found that medical AI models often exhibit shortcut learning, in which they learn from data artifacts rather than from actual pathology.

If a dataset underrepresents variations in female anatomy, the risk of misclassification spikes. A healthy female breast with dense tissue might be flagged as abnormal simply because it is a statistical outlier compared to the standard (male-skewed) training set.

This leads to overdiagnosis, unnecessary biopsies, and psychological distress. Conversely, actual pathology in women might be missed because it falls within the machine’s overly broad definition of normal. When we let machines define the boundaries of health, we are letting them decide whose body is the standard and whose is a deviation.

Radiology Is Moving from Judgment to Pattern Matching

There is a fundamental difference between diagnosis and pattern matching. A diagnosis is a holistic conclusion drawn from a patient’s history, physical exam, and imaging. Pattern matching is what an AI does: it looks for a recurring set of pixels.

As hospitals rely more on the latter, the art of radiology, the ability to reason through a complex presentation, is being devalued. This is particularly dangerous for women, who, according to several studies, are more likely to present with non-standard symptoms across a range of diseases, from autoimmune disorders to lung cancer.

AI-driven pattern recognition struggles with these non-standard presentations because they are, by definition, patterns the machine hasn’t seen enough of to recognize as significant.

A human doctor might say, “This looks strange, let’s dig deeper.” An AI simply says, “No match found.” By shifting the field’s currency from human judgment to algorithmic matching, we are building a system inherently biased against anyone who doesn’t fit the standard pattern. The radiologist who trusts their eyes over the machine is becoming an endangered species.

Performance Metrics Are Replacing Experience as Currency

In the age of AI, your value to a hospital is often boiled down to two numbers: speed and accuracy (as measured against the AI). Experience, the decades of seeing the unseeable, is becoming an invisible asset. If a senior radiologist takes twice as long as a junior radiologist because they are being more thorough, the hospital’s dashboard flags them as underperforming. As Cathy O’Neil argues in Weapons of Math Destruction, these “black box” metrics often hide deep-seated biases.

If the accuracy metrics themselves are based on biased AI ground truths, then evaluating a doctor’s performance becomes a hall of mirrors. Women in medicine already report higher rates of burnout and imposter syndrome due to systemic pressures; being measured against a machine that doesn’t account for the complexity of female cases only exacerbates this.

Promotions and reputations are increasingly tied to these skewed metrics, creating a world where the best radiologist is the one who agrees with the machine the fastest, not the one who takes the time to get it right for every patient.

The Definition of a Radiologist Is Already Changing

We are witnessing the evolution of the radiologist from an interpreter of images to an overseer of AI systems. This skill shift requires a new kind of literacy: technical, supervisory, and data-centric. While this evolution is inevitable, history shows that transition phases in high-tech industries often reinforce existing power structures. Those with the most access to new tools and training, often those already in positions of power, adapt the fastest, while others are left to manage the legacy systems.

The move toward Information Technology as the specialty’s core risks alienating those who entered the field for its clinical and human elements. As the role becomes more technical, it risks losing the very diversity it has fought for over decades.

The radiologist of the future may look more like a data scientist than a doctor, a shift that changes not just who does the work, but how we value human life on the other side of the screen. The question isn’t just “Are women safe?” It’s whether the human element of medicine can survive its own optimization.

Key Takeaways

- Radiology is not being replaced outright by AI; instead, it is being broken into separate layers of work (image capture, interpretation, validation), with different parts increasingly handled by machines, technologists, and clinicians.

- The main driver of change is system pressure rather than pure technological breakthrough: rising imaging volumes, staffing constraints, and efficiency incentives are pushing hospitals toward AI-assisted workflows.

- AI is beginning to influence clinical decisions without fully owning responsibility, shifting real operational authority toward algorithms while legal and professional accountability remains with human radiologists.

- Because AI systems learn from imperfect and uneven datasets, bias can emerge structurally rather than intentionally, leading to performance differences across patient groups even without explicit discrimination.

- The long-term transformation is not job disappearance but the redefinition of medical expertise, in which radiologists increasingly function as supervisors of automated systems rather than sole interpreters of medical images.

Disclaimer – This list is solely the author’s opinion based on research and publicly available information. It is not intended to be professional advice.
