What Parental Control Apps Miss That Predators Exploit

Parental control apps are marketed as essential shields against online dangers, but a growing body of research, litigation, and expert analysis reveals that these tools contain significant blind spots when it comes to preventing child grooming and exploitation.

End-to-end encryption blocks monitoring of private conversations where grooming most commonly occurs. Children routinely bypass controls using VPNs, secondary accounts, and factory resets. Platform-hopping from gaming environments to unmonitored messaging apps allows predators to evade detection entirely.

Even the built-in safety features of major social platforms have been found largely ineffective — Meta’s own internal research concluded that parental controls have “minimal impact” on teen behavior. Meanwhile, the National Center for Missing & Exploited Children (NCMEC) reported 546,000 online enticement reports in 2024, a 192% increase over 2023, and Thorn’s research found that one in three boys aged 9–12 experienced an online sexual interaction in 2024.

This report catalogs the specific technical, structural, and design-level gaps in the most widely used parental control apps and platform safety tools, drawing on primary sources including peer-reviewed research, government data, nonprofit investigations, and court testimony.

The Encryption Wall: The Biggest Blind Spot

The most critical limitation across virtually all parental control apps is their inability to monitor end-to-end encrypted (E2EE) communications. WhatsApp, iMessage, Signal, Facebook Messenger’s “Secret Conversations,” and Telegram’s opt-in “Secret Chats” all use encryption that scrambles messages so only the sender and recipient can read them. (Telegram’s ordinary chats are encrypted only between the device and Telegram’s servers, not end to end.) This means no third-party parental control app (not Bark, Qustodio, Net Nanny, or any other) can read the content of these messages.

This matters enormously because grooming frequently occurs in direct messages. According to the Human Trafficking Front, encrypted messaging apps have “enabled criminals to abuse children without repercussions” by eliminating the ability of platforms and law enforcement to detect solicitation. Approximately 89% of sexual solicitations of children occur in chatrooms or through instant messaging. When a predator moves a child’s conversation from a public gaming chat into an encrypted DM on WhatsApp or Telegram, every parental control app in existence is rendered blind.

Even the EU’s proposed “Chat Control” legislation, which would require client-side scanning of messages before encryption, has been criticized by researchers at the Max Planck Institute as producing massive false-positive rates while failing to meaningfully protect children — and potentially driving minors to less secure, unmonitored platforms operated by malicious actors.

Disappearing Messages: Grooming Without a Trace

A closely related gap is the proliferation of disappearing-message features across the apps children use most. Snapchat’s core design revolves around messages that vanish after viewing. Instagram offers “Vanish Mode.” WhatsApp and Telegram provide auto-delete timers.

These features are particularly dangerous in the context of grooming because they eliminate the evidence trail. The Child Rescue Coalition warns that Snapchat’s disappearing messages “make it easier for predators to groom and exploit children without leaving a trace” and “encourage risky behavior” while making it “harder for parents or authorities to detect and intervene”.

Critically, Snapchat’s own Family Center parental controls do not let parents view their child’s private chats. Worse still, children can unilaterally remove a parent’s linked account from Family Center at any time, and Snapchat will not notify the parent.

In an especially troubling design choice documented in a Fairplay investigation, Meta implemented an animated reward that incentivizes underage users to activate Disappearing Messages on Instagram. Researchers noted that “Disappearing Messages can be used for grooming, drug sales, etc., and leave the minor account with no recourse”.

Children Routinely Bypass Controls

No parental control product on the market is foolproof. Cybersecurity educator The White Hatter states plainly: “Even the most advanced apps can often be bypassed by tech-savvy youth”. The methods children use to circumvent controls are well documented:

- VPN apps: Virtual Private Networks mask internet activity and render many parental filtering systems useless. Free VPNs are widely available, and children as young as eight or nine share VPN access details at school. A Childnet study found that 16% of children who used VPNs did so specifically to circumvent parental controls.

- Factory resets: Some children simply reset devices to factory settings, wiping all parental controls in one step.

- Phantom accounts: Creating hidden user accounts on devices allows children to operate freely on a secret profile while parents monitor a decoy account.[19]

- Web proxies: These services route traffic through unblocked servers, slipping past content filters.

- Exploiting bugs: Children search online forums and YouTube tutorials for vulnerabilities in parental control software.

- Creative workarounds: Adjusting device clocks to extend screen-time limits, using Google Docs as a chat platform, or finding backdoors to blocked apps through other apps (e.g., accessing TikTok via Pinterest).

Screen Time Consultant Emily Cherkin, who advises families on digital safety, concludes: “If parental controls worked, I would not be asked this question regularly, I would not have private coaching clients, and I would not hear story after story about how kids got around the controls”.

Platform-Hopping: The Off-Platform Pivot

One of the most dangerous patterns in online grooming is what researchers call the “off-platform pivot.” The grooming process typically begins on a public, moderated platform — often a game like Roblox or Fortnite — and then migrates to a private, less moderated space like Discord, Snapchat, or Telegram.

The Discord Pipeline

The Child Sexual Exploitation Institute (CSE Institute) published a detailed analysis in February 2026 describing how Discord’s design makes it “particularly well-suited to conceal and perpetrate exploitation.” Discord’s invite-only servers are invisible to outsiders, and direct messages provide predators privacy to “isolate minors and accelerate grooming in spaces with no meaningful oversight”.

The typical pattern documented in multiple legal proceedings: initial contact on a gaming platform → conversation moves to Discord → private messaging enables coercion and exploitation. In Texas, a filing describes initial contact on Roblox at age ten, then a move to Discord before an alleged assault. In California, a ten-year-old was groomed and abducted after meeting an adult on Roblox, with Discord facilitating continued manipulation.

Predators use voice-altering technology on Discord to pose as the victim’s peers, and offer in-game currency or emotional validation to manipulate minors. Discord’s direct message filtering for minors defaults to a setting that does not screen communications from users labeled as “friends”, and since predators build “friend” status early, this protection is largely ineffective.

Gaming Voice Chat

Most parental control apps cannot monitor in-game voice chat at all. Predators exploit this by posing as near-age peers in voice chat, gradually pushing boundaries, and encouraging secrecy (“don’t tell your parents”). Even in games where parental controls exist, conversations that have already migrated off-platform are beyond the reach of any monitoring tool.

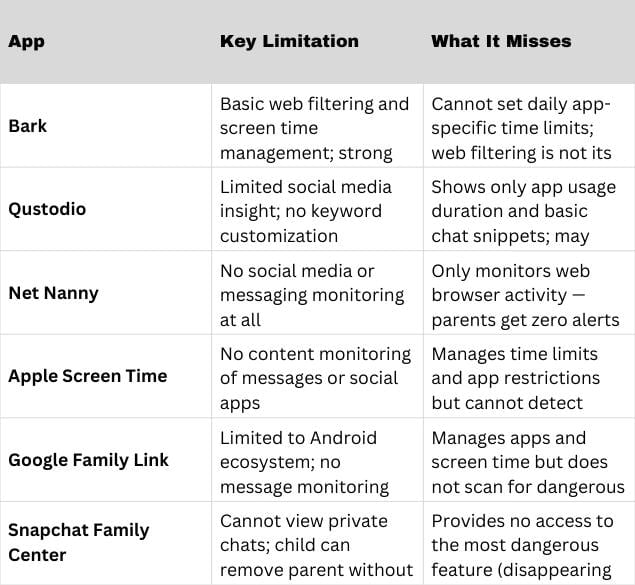

App-by-App Gaps in Popular Parental Controls

Each of the most widely recommended parental control apps has specific, documented blind spots that parents are rarely told about:

None of these apps can read end-to-end encrypted messages. None can monitor in-game voice chat. None can follow a conversation that moves from one platform to another.

Instagram’s “Teen Accounts”: A Case Study in Platform Failure

In September 2024, Meta launched Instagram “Teen Accounts,” automatically placing users under 18 into accounts with enhanced safety settings. Meta promised “built-in protections for teens, peace of mind for parents.”

A coalition including Fairplay, the HEAT Initiative, and Design It for Us systematically tested all 47 of Meta’s claimed safety features for teens. The results were stark:

- Only 17% (8 tools) worked as advertised with no limitations

- 19% (9 tools) reduced harm but had notable limitations

- 64% (30 tools) were either ineffective or no longer available
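The three shares above can be checked against the 47 tools tested. The sketch below is a minimal arithmetic check, not part of the Fairplay report: the counts of 8 and 30 come from the figures quoted above, while the middle group's count is an assumption, inferred as the remainder of the 47. The names `TOTAL_TOOLS` and `share` are illustrative only.

```python
# Counts quoted in the summary above.
TOTAL_TOOLS = 47
worked_as_advertised = 8
ineffective_or_unavailable = 30

# Assumption: the middle group is whatever remains of the 47 tools.
limited = TOTAL_TOOLS - worked_as_advertised - ineffective_or_unavailable


def share(count, total=TOTAL_TOOLS):
    """Return count as a whole-number percentage of total."""
    return round(100 * count / total)


shares = {
    "worked as advertised": share(worked_as_advertised),
    "reduced harm with limitations": share(limited),
    "ineffective or unavailable": share(ineffective_or_unavailable),
}

for label, pct in shares.items():
    print(f"{label}: {pct}%")
```

Under that assumption the rounded shares come out to 17%, 19%, and 64%, matching the reported breakdown.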

Among the specific failures documented:

- Adults remained able to message teenagers who didn’t follow them

- Instagram’s algorithm continued recommending sexual content, violent content, and self-harm content to teens despite “sensitive content” filters

- Children under 13 were actively using the platform (despite Meta’s ban), and Instagram’s recommendation algorithm “actively incentivized children under 13 to perform risky sexualized behaviors”

- A former senior Meta engineering leader, Arturo Bejar, stated: “The minors didn’t begin that way, but the product design taught them that. At that point, Instagram itself becomes the groomer”

Nearly 60% of teens aged 13–15 reported encountering unsafe content and unwanted messages on Instagram during a six-month period, and nearly 40% of those who received unwanted messages said they came from someone wanting a sexual or romantic relationship.

Meta’s Own Research: Project MYST

Perhaps the most damning evidence comes from Meta itself. An internal study called “Project MYST” (Meta and Youth Social Emotional Trends), conducted in partnership with the University of Chicago and surveying 1,000 teens and their parents, concluded that “parental and household factors have little association with teens’ reported levels of attentiveness to their social media use”.

In plain terms: parental controls, time limits, and household rules showed little measurable association with whether a teen would overuse or compulsively engage with social media. Both parents and teens agreed on this finding. The study also found that teens experiencing stressful life events, the very children most vulnerable to grooming, were even less able to moderate their usage.

These findings were revealed during testimony in a social media addiction trial in Los Angeles County Superior Court. The plaintiff’s lawyer argued that Meta’s own data proved the company’s built-in parental tools were functionally useless, yet Meta continued marketing them as safety solutions.


Sideloaded “Parental Control” Apps: Stalkerware in Disguise

Parents who turn to unofficial or “sideloaded” parental control apps (downloaded outside official app stores) face an entirely different category of risk. A peer-reviewed study by UCL and St. Pölten University of Applied Sciences, published in the Proceedings on Privacy Enhancing Technologies, found alarming results:

- 8 out of 20 sideloaded apps were flagged for stalkerware indicators

- 3 transmitted sensitive data (including children’s data) unencrypted

- Half lacked any privacy policy

- Apps hid their presence on devices — a practice prohibited in official app stores

- Features included intercepting messages from dating apps, taking remote screenshots, reading messages, and even listening to live phone calls

Lead researcher Dr. Leonie Tanczer of UCL stated: “Once you start removing the safeguards that official store apps are required to have, it is a slippery slope from legitimate use to unethical surveillance or, in extreme cases, domestic abuse”. Researchers also noted that some apps previously marketed for catching cheating spouses had simply been rebranded as “parental control” tools.

The Scale of the Threat These Gaps Enable

The gaps documented above exist against a backdrop of rapidly escalating online child exploitation:

- NCMEC’s CyberTipline received reports reflecting 29.2 million separate incidents of child sexual exploitation in 2024. Online enticement reports reached 546,000 — a 192% increase over 2023.

- NCMEC reported 518,720 online enticement reports in the first half of 2025, up from 292,951 in the same period of 2024, a 77% increase. Reports of AI-generated exploitation soared from 6,835 to 440,419 over the same period.

- Thorn’s 2024 research found 33% of boys aged 9–12 reported an online sexual interaction — the highest rate in five years of data collection. Being sent sexual messages increased by 12 percentage points among this group, and being asked to send nude photos rose by 9 percentage points.

- One in five minors who experienced an online sexual interaction told no one about it. Only 62% turned to a trusted adult — down 9 points from the previous year.

- An estimated 500,000 online predators are active daily, and approximately 82% of child sex crimes begin with contact on social media platforms.
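The growth figures in this list can be reproduced with a simple percent-change calculation. The sketch below is only a reader's check on the numbers quoted above, not NCMEC's methodology; `percent_change` is a hypothetical helper, and the implied 2023 baseline is back-calculated from the rounded 546,000 and 192% figures, so it is approximate.

```python
def percent_change(before, after):
    """Percentage increase (or decrease) from before to after."""
    return (after - before) / before * 100


# First half of 2024 vs. first half of 2025, as quoted above.
enticement_growth = percent_change(292_951, 518_720)
ai_report_growth = percent_change(6_835, 440_419)

# Back-calculated assumption: a 192% increase means 2024 is 2.92x the 2023 total.
implied_2023_enticement = 546_000 / (1 + 192 / 100)

print(f"Online enticement, H1 2024 to H1 2025: +{enticement_growth:.0f}%")
print(f"AI-generated exploitation reports: +{ai_report_growth:.0f}%")
print(f"Implied 2023 enticement reports: about {implied_2023_enticement:,.0f}")
```

The first result rounds to the 77% quoted above. The AI-related figure works out to a roughly 64-fold rise, which is why it is usually described in absolute numbers rather than as a percentage.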

Structural Misalignment: Why Controls Stay Weak

A fundamental tension underlies all of these gaps. Screen Time Consultant Emily Cherkin and other researchers point out that effective parental controls would reduce the engagement metrics that drive platform revenue — creating a structural disincentive for tech companies to build truly effective safety tools.

The Children’s Online Privacy Protection Act (COPPA) of 1998, which requires verifiable parental consent before online services collect personal information from children under 13, obliges companies to make “some effort” to protect underage users, but companies are widely reported to be doing the bare minimum. In multiple congressional hearings, tech executives have deflected responsibility for child safety onto parents, while their own internal research (as with Project MYST) shows that the parental tools they provide are ineffective.

The Fairplay report concluded that “platform self-regulation by Meta is insufficient to protect teens using Instagram” and recommended legislative solutions, including passage of the Kids Online Safety Act (KOSA).

What Parents Should Understand

Given these documented limitations, cybersecurity and child-safety experts consistently recommend that parental controls be treated as one layer of a multi-layered approach — not as a standalone solution. Key recommendations from authoritative sources include:

- Recognize that no app can replace communication. Open, judgment-free conversations about online experiences are more protective than any software tool.

- Watch for the off-platform pivot. Phrases like “add me on Snap,” “what’s your Discord,” or “join my server” are early red flags that a conversation is moving to an unmonitored space.

- Consider purpose-built devices for younger children. Minimalist phones like Wisephone or Pinwheel strip away web browsers, social media, and app stores entirely — eliminating the bypass problem.

- Layer controls together. Using Bark’s social monitoring alongside Apple Screen Time’s restrictions, for example, covers more gaps than either tool alone.

- Audit platform-specific settings regularly. Discord, Roblox, Fortnite, and Snapchat all have safety settings that default to weaker protections, requiring manual tightening.

- Know what your tools cannot see. If a child uses WhatsApp, Signal, or Telegram, no parental control app will read those messages. If they play games with voice chat, no app monitors that either.

- Report immediately. NCMEC’s CyberTipline (CyberTipline.org) and the FBI’s Internet Crime Complaint Center (IC3) are the primary reporting mechanisms for suspected online exploitation of minors.
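To make the "off-platform pivot" recommendation concrete, here is a minimal sketch of what phrase-based flagging amounts to. It is an illustration only, not how Bark or any other product works: the pattern list contains just the three phrases quoted above, and `flag_pivot_phrases` is a hypothetical helper. It can also only run on text a parent or tool can actually see, so it does nothing for encrypted chats, voice chat, or disappearing messages.

```python
import re

# Only the three example phrases quoted above; a real list would be far longer
# and would still miss slang, misspellings, and other languages.
PIVOT_PATTERNS = [
    r"\badd me on snap(chat)?\b",
    r"\bwhat'?s your discord\b",
    r"\bjoin my server\b",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in PIVOT_PATTERNS]


def flag_pivot_phrases(message):
    """Return any of the example pivot phrases found in one chat message."""
    # Normalize curly apostrophes so "what’s" and "what's" both match.
    text = message.replace("\u2019", "'")
    hits = []
    for pattern in _COMPILED:
        match = pattern.search(text)
        if match:
            hits.append(match.group(0))
    return hits


if __name__ == "__main__":
    for line in ["gg! add me on Snap", "What’s your Discord?", "nice build"]:
        print(line, "->", flag_pivot_phrases(line))
```

A match is a prompt for a conversation, not proof of grooming: the same phrases are common between children who already know each other.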

Sources

1. Parental control ineffective to curb kids’ social media addiction

2. Parental controls don’t curb social media use by teens: Meta

3. REPORT: 1 in 3 Boys Age 9-12 Reported Online Sexual Interactions

4. CyberTipline Data – MissingKids.org

5. 2024 Youth Perspectives data shows urgent youth threat – Thorn

6. Are there apps to monitor secret conversations?

7. What is encryption and how does it impact children’s online safety?

8. Encrypted Message Apps and Child Safety | Human Trafficking Front

9. Research – SafeNet Guardians

10. “More monitoring, but not more protection” – MPG

11. The Dangers of Disappearing Messages: What Parents Need to Know

12. 5 Steps to Staying Safe on Snapchat

13. Parent’s Guide to Making SnapChat Safe for Teens – Tech Lockdown

14. Snapchat Parental Controls: What They Can (and Can’t) Do – Gabb

15. [PDF] Teen-Accounts-Broken-Promises-How-Instagram-is-failing-to

16. Whack-A-Mole: Why Parental Control Apps Often Fail and What A

17. How VPNs Let Kids Bypass Parental Controls – LinkedIn

18. [PDF] Young people’s use of VPNs | Childnet

19. How Kids Bypass Parental Controls in 2024: A Parent’s Guide

20. Why Parental Control Apps Are Not Enough to Keep Our Kids Safe

21. 5 Reasons Parental Controls Will Fail You

22. Built for Connection, Vulnerable to Exploitation: The Case of Discord

23. Grooming in Kids’ Games: What’s New, What It Looks Like, and How

24. Kids and Gaming: What Parents Don’t Realize About In-Game Chats

25. 2026 Best Parental Control: Bark vs Qustodio vs Net Nanny – AirDroid

26. Family Guide to Parental Controls – ConnectSafely

27. How To Use Parental Controls To Keep Your Kid Safer Online – FTC

28. Report: Instagram Still Rife With Safety Issues For Teens | TIME

29. Instagram’s Teen Accounts: A Report on Safety Failures – LinkedIn

30. Teen Accounts, Broken Promises: How Instagram is Failing to

31. Metas Project MYST reveals parental controls ineffective for teen

32. Unofficial parental control apps put children’s safety and privacy at risk

33. Child Protection Apps Infringe on Privacy — USTP

34. Unofficial parental control apps put children’s safety and privacy at risk

35. Surge in Online Crimes Against Children Driven by AI and Evolving

36. Discord Parental Controls Review – Is It Safe? | Protect Young Eyes

Disclosure: This article was researched with the assistance of AI.
